- Blog

- Wiring a bathroom fan and light to one switch

- Dungreed room no entrance

- Wavelet denoising easyhdr

- Page command controllermate

- Automation arraysync

- Run collective soul

- Math magic trick

- Att push to talk phones

- Chimex chicken

- Adobe photoshop elements review

- Cleanx back saver

- Trace my ip

- Manictime professional

- Kensingtonworks software

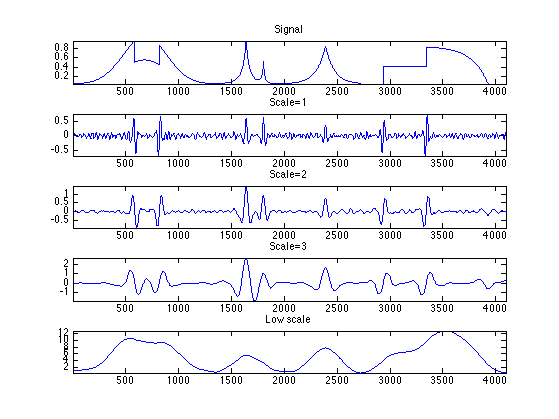

The short-time Fourier transform (STFT) is often used to analyze speech in the time-frequency domain. Speech is a time-varying signal whose characteristics usually change at syllabic rates of about 10 times per second. This is slow compared with short fixed intervals of 10–30 ms, so over such intervals the signal can be treated as approximately stationary. Most existing denoising methods deal only with the amplitude spectrum, whereas an end-to-end system performs speech enhancement directly, without that restriction.
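A minimal sketch of STFT framing, assuming 25 ms frames with a 10 ms hop and a Hann window. These are common choices inside the 10–30 ms range mentioned above, not values taken from the text. The helper names are illustrative, and the DFT is written out naively so the block has no dependencies. In practice you would use an FFT library.

```python
import cmath
import math


def frame_signal(x, sample_rate, frame_ms=25.0, hop_ms=10.0):
    """Split x into overlapping frames; trailing samples that do not fill a frame are dropped."""
    frame_len = int(round(sample_rate * frame_ms / 1000))
    hop = int(round(sample_rate * hop_ms / 1000))
    if len(x) < frame_len:
        return []
    n_frames = 1 + (len(x) - frame_len) // hop
    return [list(x[i * hop : i * hop + frame_len]) for i in range(n_frames)]


def hann(n):
    # Periodic Hann window, a common choice for STFT analysis.
    return [0.5 - 0.5 * math.cos(2 * math.pi * k / n) for k in range(n)]


def magnitude_spectrum(frame):
    """Windowed DFT magnitude for bins 0..N/2 (naive O(N^2) loop, fine for a demo)."""
    n = len(frame)
    xw = [a * w for a, w in zip(frame, hann(n))]
    mags = []
    for k in range(n // 2 + 1):
        s = sum(xw[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
        mags.append(abs(s))
    return mags


def stft_magnitude(x, sample_rate, frame_ms=25.0, hop_ms=10.0):
    """Magnitude spectrogram: one list of bin magnitudes per frame."""
    return [magnitude_spectrum(f) for f in frame_signal(x, sample_rate, frame_ms, hop_ms)]
```

At 16 kHz, a 25 ms frame is 400 samples and a 10 ms hop is 160 samples. One second of audio therefore yields 1 + (16000 - 400) // 160 = 98 frames, each with 201 magnitude bins. The fixed frame length is exactly the time/frequency-resolution trade-off that the STFT cannot adjust once chosen.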

WAVELET DENOISING EASYHDR FULL

Deep neural networks (DNNs) contain multiple nonlinear hidden layers, which gives them great potential to capture the complex relationship between noise and clean speech. Many training algorithms have been proposed for deep networks, and DNNs have been applied to speech recognition, speech denoising, and speech separation. Other work used both convolutional and recurrent neural network architectures to exploit local structure in both the frequency and temporal domains for speech enhancement. Tan and Wang combined a convolutional encoder-decoder (CED) and long short-term memory (LSTM) into a convolutional recurrent network (CRN) to achieve real-time monaural speech enhancement. Their model is noise- and speaker-independent, and the CRN has far fewer trainable parameters than an existing LSTM-based model. However, the fully connected layers used in DNNs and convolutional neural networks (CNNs) may not accurately describe the local information of the speech signal, especially its high-frequency components. To address this, an enhancement model based on a fully convolutional network (FCN) operating on the raw waveform was proposed.
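To make the locality and parameter-count argument concrete, here is a small sketch. It is not the FCN from the cited work. The layer sizes and the `tiny_fcn` helper are hypothetical and chosen only for illustration. A convolution reuses one short kernel at every time step, so each output depends on a local neighborhood of samples, while a fully connected layer mixes every input with every output.

```python
def conv1d_same(x, kernel):
    """1-D convolution (cross-correlation, as in most DL frameworks) with zero 'same' padding."""
    k = len(kernel)
    pad_left = (k - 1) // 2
    padded = [0.0] * pad_left + list(x) + [0.0] * (k - 1 - pad_left)
    return [sum(kernel[j] * padded[i + j] for j in range(k)) for i in range(len(x))]


def relu(v):
    return [max(0.0, a) for a in v]


def tiny_fcn(waveform, kernels):
    """Hypothetical FCN: stacked conv layers and no fully connected layer.

    Output length equals input length, so the model maps a waveform to a waveform.
    """
    h = list(waveform)
    for i, ker in enumerate(kernels):
        h = conv1d_same(h, ker)
        if i < len(kernels) - 1:  # no activation after the last layer
            h = relu(h)
    return h


def dense_params(n_in, n_out):
    # Full weight matrix plus one bias per output unit.
    return n_in * n_out + n_out


def conv_params(kernel_size, in_ch, out_ch):
    # Kernel weights shared across all time steps plus one bias per output channel.
    return kernel_size * in_ch * out_ch + out_ch
```

For example, a fully connected layer mapping 512 samples to 512 outputs needs 512 × 512 + 512 = 262,656 parameters. A convolutional layer with 32 kernels of length 11 on one input channel needs only 11 × 32 + 32 = 384.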

Noise interference greatly degrades the performance of speech processing systems and reduces speech quality. Speech denoising aims to recover clean speech from noise-polluted signals, which is crucial for applications such as automatic speech recognition (ASR) and hearing aids. Several speech-denoising and speech-enhancement methods have been proposed based on the statistical differences between speech and noise. These include spectral subtraction, …-based estimation, Wiener filtering, the subspace method, nonnegative matrix factorization (NMF), and minimum mean square error (MMSE) estimation. Because speech and noise are strongly coupled in time and frequency, most of these filtering methods are limited to windowing or masking operations in the time or frequency domain, and it is difficult for them to separate signal from noise effectively. As a nonlinear filter, the neural network was also applied to this problem in the past, for example in early speech-denoising studies that used a shallow neural network (SNN). However, constraints on computing power and on the size of the training data restricted these implementations to relatively small networks, which limited their denoising performance.

By learning a deep nonlinear network structure, deep learning can approximate complex functions, produce distributed representations of the input data, and learn the essential characteristics of a data set from a small number of samples. Current deep-learning frameworks usually adopt a multilevel model and emphasize the depth of the model structure. Deep learning also focuses on feature learning, so training relies on large data sets, which highlights the importance of big data for building complete and complex models.
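The classical methods above are only named, not described. As an illustration, here is a minimal sketch of two of them, power spectral subtraction and a simple Wiener-style gain, applied to one frame of magnitude spectra. It assumes the noise power spectrum can be estimated by averaging frames that contain only noise. That is an assumption, as are the parameter names `alpha` (over-subtraction factor) and `floor` (spectral floor).

```python
import math


def estimate_noise_psd(noise_frames):
    """Average power spectrum over magnitude frames assumed to contain noise only."""
    n_bins = len(noise_frames[0])
    return [sum(f[k] ** 2 for f in noise_frames) / len(noise_frames) for k in range(n_bins)]


def spectral_subtraction(noisy_mag, noise_psd, alpha=1.0, floor=0.01):
    """Power spectral subtraction on one frame.

    |S|^2 = max(|Y|^2 - alpha * |N|^2, floor * |Y|^2); returns the estimated magnitude |S|.
    """
    out = []
    for y, n in zip(noisy_mag, noise_psd):
        p = y * y - alpha * n
        out.append(math.sqrt(max(p, floor * y * y)))
    return out


def wiener_gain(noisy_mag, noise_psd, eps=1e-12):
    """Wiener-style gain per bin, H = max(|Y|^2 - |N|^2, 0) / |Y|^2, to multiply |Y| by."""
    return [max(y * y - n, 0.0) / max(y * y, eps) for y, n in zip(noisy_mag, noise_psd)]
```

Both operate bin by bin on the amplitude spectrum and leave the phase untouched, which is the frequency-domain masking behavior the paragraph describes. Setting `alpha` above 1 subtracts more aggressively. The spectral floor keeps bins from collapsing to zero, which reduces the "musical noise" artifacts of plain subtraction.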
In real environments, speech signals are inevitably affected by noise from the surrounding environment and the transmission medium, as well as by electrical noise inside communication equipment. The work proposed a speech-denoising method using deep learning. The predictor and target signals of the network were the amplitude spectra of the wavelet-decomposition vectors of the noisy and clean audio signals, respectively, and the output of the network was the amplitude spectrum of the denoised signal. The regression network took the predictor as input and was trained to minimize the mean square error between its output and the target. The denoised wavelet-decomposition vectors were transformed back to the time domain using the output amplitude spectrum and the phase of the corresponding noisy wavelet-decomposition vector. The denoised speech was then obtained by the inverse wavelet transform.

This method overcomes the problem that the time and frequency resolution of the short-time Fourier transform cannot be adjusted. Because the noise energy is gradually reduced during wavelet decomposition, the noise-reduction effect improved in each frequency band. The experimental results showed a good denoising effect across the whole frequency band.
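The pipeline described above can be sketched end to end as follows. This is a sketch under stated assumptions, not the authors' implementation. The text does not name the wavelet, the decomposition depth, or the network. The orthonormal Haar wavelet, the `levels` parameter, and the `model` callable (standing in for the trained regression network) are all hypothetical. "Amplitude spectra of the wavelet-decomposition vectors" is read as a DFT of each coefficient vector, and reconstruction reuses the noisy phase.

```python
import cmath
import math

SQRT2 = math.sqrt(2.0)


def haar_step(x):
    """One level of the orthonormal Haar DWT (assumed wavelet); len(x) must be even."""
    approx = [(x[2 * i] + x[2 * i + 1]) / SQRT2 for i in range(len(x) // 2)]
    detail = [(x[2 * i] - x[2 * i + 1]) / SQRT2 for i in range(len(x) // 2)]
    return approx, detail


def haar_decompose(x, levels):
    """Return [cA_L, cD_L, ..., cD_1]; len(x) must be divisible by 2**levels."""
    details, approx = [], list(x)
    for _ in range(levels):
        approx, detail = haar_step(approx)
        details.insert(0, detail)
    return [approx] + details


def haar_reconstruct(coeffs):
    """Inverse of haar_decompose."""
    approx = coeffs[0]
    for detail in coeffs[1:]:
        x = []
        for a, d in zip(approx, detail):
            x.extend([(a + d) / SQRT2, (a - d) / SQRT2])
        approx = x
    return approx


def dft(v):
    n = len(v)
    return [sum(v[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n)) for k in range(n)]


def idft(spec):
    n = len(spec)
    return [sum(spec[k] * cmath.exp(2j * math.pi * k * t / n) for k in range(n)).real / n
            for t in range(n)]


def mse(a, b):
    """Training loss between predicted and target amplitude spectra."""
    return sum((p - q) ** 2 for p, q in zip(a, b)) / len(a)


def training_pairs(noisy, clean, levels):
    """(predictor, target) amplitude spectra, one pair per decomposition vector."""
    return [([abs(c) for c in dft(nv)], [abs(c) for c in dft(cv)])
            for nv, cv in zip(haar_decompose(noisy, levels), haar_decompose(clean, levels))]


def denoise(noisy, levels, model):
    """Inference: predict amplitudes, reuse the noisy phase, then invert DFT and DWT."""
    out_coeffs = []
    for vec in haar_decompose(noisy, levels):
        spec = dft(vec)
        amp_hat = model([abs(c) for c in spec])  # hypothetical trained network
        phase = [cmath.phase(c) for c in spec]
        out_coeffs.append(idft([a * cmath.exp(1j * p) for a, p in zip(amp_hat, phase)]))
    return haar_reconstruct(out_coeffs)
```

With an identity `model`, `denoise` returns the input up to rounding error, which checks that the decompose, amplitude/phase split, and reconstruct path is lossless. A real system would train `model` by minimizing `mse` over the pairs produced by `training_pairs`.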
